by CPI Staff | Sep 15, 2025 | Blog, LLM, Phi-3

In this blog post, "Alpaca vs Phi-3 for Instruction Fine-Tuning in Practice", we will unpack the trade-offs between these two popular paths to instruction-tuned models, show practical steps to fine-tune them, and help you choose the right option for your team. Instruction...

by CPI Staff | Sep 15, 2025 | Blog, Neo4j, RAG

In this blog post, "Understanding Word Embeddings for Search, NLP, and Analytics", we will unpack what embeddings are, how they work under the hood, and how your team can use them in real products without getting lost in jargon. At a high level, a word embedding is a...

by CPI Staff | Sep 15, 2025 | AI, Blog, LLM

In this blog post, "Preparing Input Text for Training LLMs that Perform in Production", we will walk through the decisions and steps that make training data truly useful. Whether you’re pretraining from scratch or fine-tuning an existing model, disciplined data prep is...

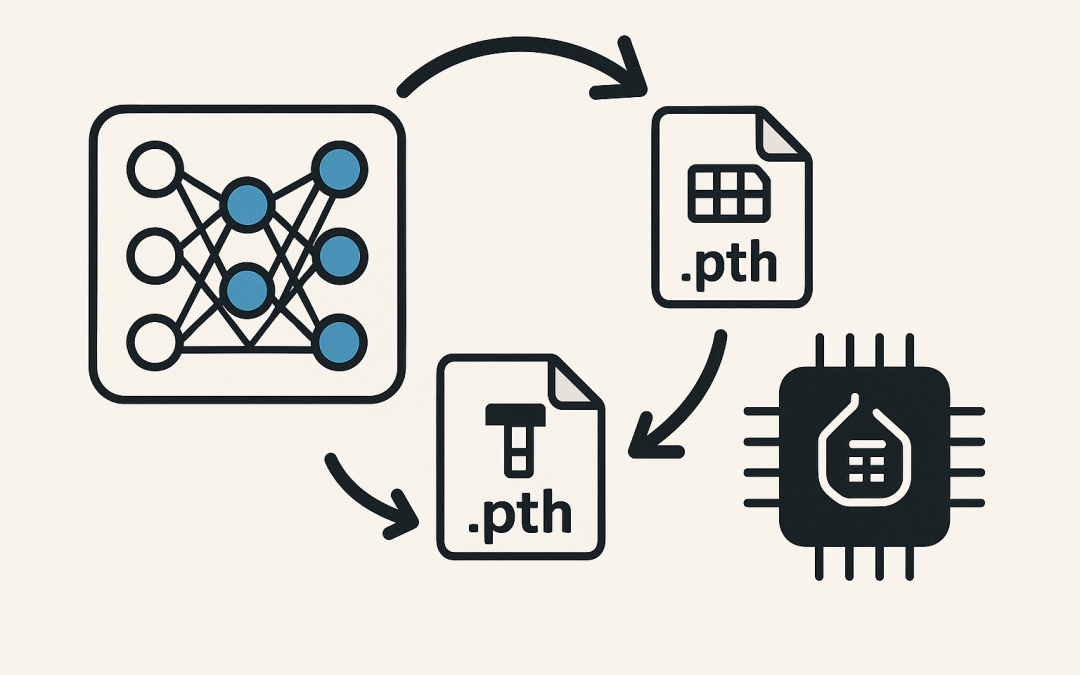

by CPI Staff | Sep 15, 2025 | AI, Blog, LLM, PyTorch

In this blog post, "Best Practices for Loading and Saving PyTorch Weights in Production", we will map out the practical ways to persist and restore your models without surprises. Whether you build models or manage teams shipping them, understanding how PyTorch saves...

by CPI Staff | Sep 15, 2025 | AI, Blog, LLM

In this blog post, "Practical ways to fine-tune LLMs and choosing the right method", we will walk through what fine-tuning is, when you should do it, the most useful types of fine-tuning, and a practical path to ship results. Large language models are astonishingly...

by CPI Staff | Sep 15, 2025 | Azure, Blog, Phi-3

In this blog post, "Understanding Azure Phi-3 and how to use it across cloud and edge", we will unpack what Azure Phi-3 is, why it matters, and how you can put it to work quickly and safely. Think of Azure Phi-3 as a family of small, efficient language models designed to...