In this blog post, "The 5 Biggest AI Agent Deployment Mistakes Mid-Size Firms Make", we explain what AI agents are, how the technology works in plain English, and the five mistakes that cause most deployments to stall, overspend, or create unnecessary risk.

AI agents are quickly moving from interesting demos to real business tools. For many mid-size companies, that creates a familiar problem. The board wants an AI plan, staff are already experimenting, vendors are promising dramatic productivity gains, and no one is quite sure where the real value starts or where the risk begins.

At a high level, an AI agent is more than a chatbot. A chatbot usually answers questions. An AI agent can go further. It can read information, follow instructions, make limited decisions, and complete tasks inside systems your team already uses, such as Microsoft 365, your service desk, or your customer database.

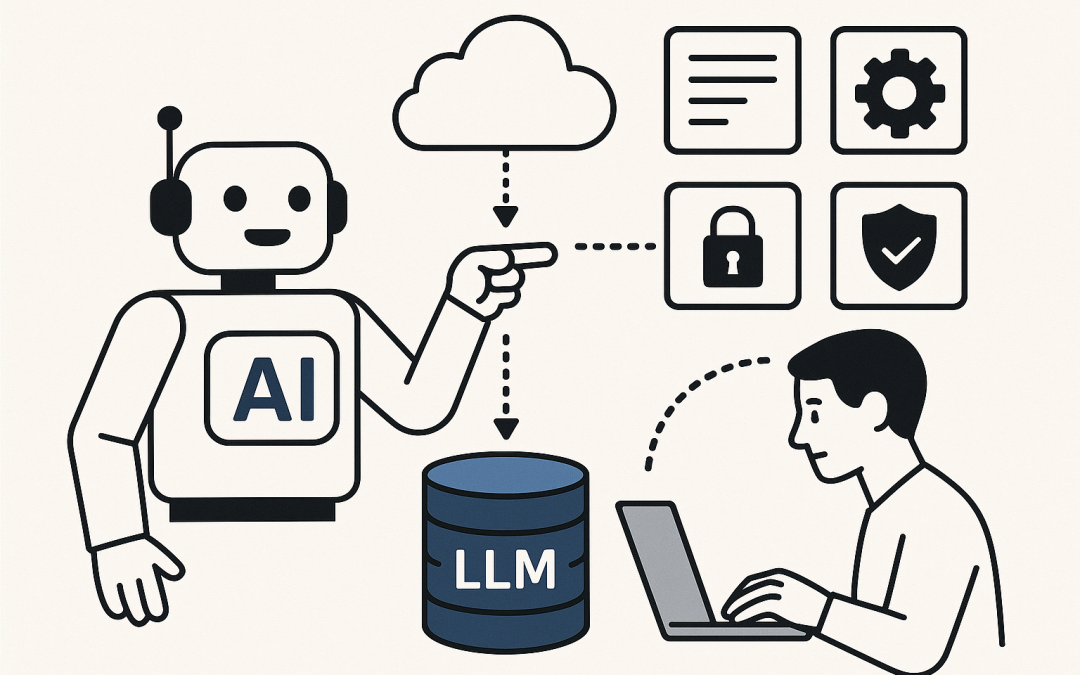

The technology behind an AI agent is fairly simple to understand. At its core is a large language model, which is the AI engine that reads, writes, and reasons with language. Around that engine sit four other parts: your business rules, approved company data, access to tools or systems, and guardrails that decide what the agent is allowed to do and when a human must step in. In other words, the model provides the brain, but the controls around it determine whether it is useful, safe, and worth the money.
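For readers who want to see how those five parts fit together, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular product is built: the model is a stub function standing in for a real LLM call, and `Agent`, `Action`, `stub_model` and the `draft_email` tool are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    """A step the model proposes: which tool to use and with what input."""
    tool: str
    payload: str


@dataclass
class Agent:
    model: Callable[[str], Action]          # the "brain": turns a prompt into a proposed action
    business_rules: str                     # your instructions, added to every request
    approved_sources: dict[str, str]        # the only company data the agent may read
    tools: dict[str, Callable[[str], str]]  # the systems the agent is allowed to act on
    needs_approval: set[str] = field(default_factory=set)  # guardrail: tools a human must sign off

    def run(self, request: str, approve: Callable[[Action], bool]) -> str:
        context = "\n".join(self.approved_sources.values())
        prompt = f"{self.business_rules}\n{context}\n{request}"
        action = self.model(prompt)
        # Guardrail 1: the model can only use tools it has been explicitly given.
        if action.tool not in self.tools:
            return f"blocked: {action.tool} is not an allowed tool"
        # Guardrail 2: some actions wait for a person before they run.
        if action.tool in self.needs_approval and not approve(action):
            return "held for human review"
        return self.tools[action.tool](action.payload)


def stub_model(prompt: str) -> Action:
    # Hypothetical stand-in for a real LLM call; it always proposes drafting an email.
    return Action(tool="draft_email", payload="Draft reply to customer")


agent = Agent(
    model=stub_model,
    business_rules="Answer only from approved sources.",
    approved_sources={"refund-policy": "Refunds are available within 30 days."},
    tools={"draft_email": lambda text: f"drafted: {text}"},
    needs_approval={"draft_email"},
)
print(agent.run("Customer asks about refunds", approve=lambda action: True))
# drafted: Draft reply to customer
```

Notice that both guardrails sit outside the model. Swapping in a different or better model would not change what the agent is permitted to do, which is exactly why the controls, not the engine, decide whether the agent is safe.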

That is why the biggest AI failures are rarely caused by the model itself. They usually come from poor planning, weak security, messy data, and unrealistic expectations. Here are the five mistakes we see most often.

1. Starting with the technology instead of the business problem

The first mistake is asking "How do we deploy an AI agent?" before asking "What expensive or frustrating problem are we trying to fix?"

This sounds obvious, but it is where many projects go wrong. Leadership gets excited about the idea of an agent. A few demos are shown. A pilot is approved. Then, three months later, the business still cannot answer a basic question: what result were we buying?

The better approach is to start with a painful process that is repetitive, slow, and measurable: for example, reducing the time spent answering internal IT questions, speeding up sales proposal drafting, or cutting the admin involved in onboarding new staff.

If you cannot describe the problem in one sentence, the project is probably too vague. Good AI agent projects usually target one clear outcome, such as:

- reduce help desk ticket volume

- shorten invoice processing time

- improve response speed for customer enquiries

- help staff find approved policies faster

Business outcome: less wasted spend, faster time to value, and a much better chance of user adoption.

2. Giving the agent access to too much, too soon

This is the mistake that keeps risk and compliance teams awake at night. An AI agent is only as safe as the data and permissions behind it. If your existing access controls are messy, the agent can expose that mess very quickly.

For example, if staff in Microsoft 365 can already see files they should not see, an agent connected to that environment may help them find those files even faster. The problem is not that the AI became dangerous on its own. The problem is that it inherited weak controls from the systems around it.

Australian businesses also need to think carefully about privacy obligations, sensitive customer data, employee records, confidential commercial information, and where data is stored or processed. If an agent can access everything, it can also spread mistakes at speed.

This is why AI projects should sit alongside core cyber security, not outside it. The Essential Eight (often written Essential 8), the baseline set of cyber security mitigation strategies published by the Australian Signals Directorate, still matters. So do identity controls, device security, data classification, audit logs, and clear approval pathways. In practice, that often means starting with tightly limited access, approved knowledge sources, and clear human review points before you expand further.
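Limiting access in practice means filtering what the agent can retrieve before the model ever sees it. The minimal Python sketch below assumes a simple in-memory document list; the `Document` fields, the `APPROVED_SOURCES` allowlist and the `retrieve` function are hypothetical, and a real deployment would lean on the permission and classification controls already in your platform rather than reimplementing them in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    name: str
    source: str                     # e.g. "policies", "templates", "hr"
    classification: str             # e.g. "general", "sensitive"
    allowed_groups: frozenset[str]  # groups that can already open this file


# Hypothetical starting scope for a first rollout: tightly limited on purpose.
APPROVED_SOURCES = {"policies", "templates"}
BLOCKED_CLASSIFICATIONS = {"sensitive"}

audit_log: list[str] = []


def retrieve(user: str, user_groups: set[str], docs: list[Document]) -> list[Document]:
    """Return only the documents the agent may use for this user, and log the access."""
    results = [
        d for d in docs
        if d.source in APPROVED_SOURCES                      # approved knowledge source
        and d.classification not in BLOCKED_CLASSIFICATIONS  # no sensitive data yet
        and d.allowed_groups & user_groups                   # user already has access
    ]
    audit_log.append(f"{user} retrieved {[d.name for d in results]}")
    return results


docs = [
    Document("leave-policy", "policies", "general", frozenset({"all-staff"})),
    Document("salary-review", "hr", "sensitive", frozenset({"hr"})),
    Document("board-pack", "policies", "general", frozenset({"executives"})),
]
print([d.name for d in retrieve("sam", {"all-staff"}, docs)])
# ['leave-policy']
```

The filter checks three things, not one. The user's own permissions alone are not enough, because, as the Microsoft 365 example above shows, those permissions may already be too broad. The approved-source and classification checks keep the first rollout narrow, and the audit log records what the agent actually read.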

Business outcome: lower security risk, fewer compliance headaches, and less chance of an expensive clean-up later.

3. Trying to automate a broken process

Many companies hope AI agents will clean up years of inconsistent processes. In reality, agents do not fix chaos. They usually amplify it.

If your onboarding process lives across six spreadsheets, three inboxes, and a manager’s memory, an agent will struggle. If your customer pricing rules are inconsistent, your document naming is a mess, or your policies are outdated, the agent has no reliable foundation to work from.

This is one of the least glamorous parts of AI deployment, but it matters more than the model choice in many cases. Before you automate a workflow, make sure the workflow is actually worth automating. Standardise the steps. Clean up the source documents. Decide which system holds the official version of the truth.

A good rule of thumb is this: if a capable new employee would find the process confusing, your AI agent will too.

Business outcome: better accuracy, less rework, and a stronger return on your AI investment.

4. Expecting full autonomy on day one

There is a lot of hype around agents acting like digital employees. That vision is useful, but only if you deploy it in stages. One of the biggest mistakes companies make is expecting an agent to run an end-to-end business process without meaningful human oversight from the start.

That is rarely the smartest first move. A better approach is to begin with assisted work, not fully autonomous work. Let the agent draft the email, prepare the summary, suggest the next action, or assemble the report. Then let a human approve it.

This matters most in finance, HR, legal, procurement, and customer communication. If an agent sends the wrong contract language, approves the wrong payment, or gives a customer an incorrect answer with confidence, the cost of the mistake can wipe out months of productivity gains.

Human-in-the-loop simply means a person stays involved at key points. For non-technical leaders, that is often the safest and most profitable model. You still save time, but you keep judgement where judgement belongs.
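Human-in-the-loop can be as simple as a review queue between the agent and the outside world. This minimal Python sketch assumes the agent's output arrives as drafts tagged with a category; the `HIGH_RISK` categories, `Draft` and `ReviewQueue` are hypothetical, and in practice this step would usually be an approval workflow inside a tool your team already uses.

```python
from dataclasses import dataclass

# Hypothetical categories where a person must sign off before anything leaves the business.
HIGH_RISK = {"payment", "contract", "customer_reply"}


@dataclass(eq=False)  # compare drafts by identity, so remove() takes the exact draft reviewed
class Draft:
    category: str
    content: str
    status: str = "draft"


class ReviewQueue:
    """Agent drafts go in; high-risk drafts only come out after a human approves them."""

    def __init__(self) -> None:
        self.pending: list[Draft] = []
        self.released: list[Draft] = []
        self.reviewed = 0
        self.rejected = 0

    def submit(self, draft: Draft) -> str:
        if draft.category in HIGH_RISK:
            draft.status = "pending review"
            self.pending.append(draft)
        else:
            draft.status = "released"
            self.released.append(draft)
        return draft.status

    def review(self, draft: Draft, approved: bool) -> None:
        # A person makes the final call on every high-risk draft.
        self.pending.remove(draft)
        self.reviewed += 1
        if approved:
            draft.status = "released"
            self.released.append(draft)
        else:
            draft.status = "rejected"
            self.rejected += 1

    def rejection_rate(self) -> float:
        # Share of reviewed drafts a human refused.
        return self.rejected / self.reviewed if self.reviewed else 0.0


queue = ReviewQueue()
summary = Draft("internal_summary", "Weekly ticket summary")
reply = Draft("customer_reply", "Your refund has been approved")
print(queue.submit(summary))  # released
print(queue.submit(reply))    # pending review
queue.review(reply, approved=False)
print(queue.rejection_rate())  # 1.0
```

Tracking the rejection rate per category also gives you an evidence-based way to decide when a type of work is ready for less oversight, rather than guessing.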

Business outcome: faster adoption, higher trust from staff, and fewer high-impact errors.

5. Failing to assign ownership, governance, and success measures

Many AI agent projects start as innovation experiments and stay there. No one owns the business result. No one reviews the risks regularly. No one defines success. Unsurprisingly, the project becomes a novelty instead of an operational improvement.

Every agent needs an owner on the business side and an owner on the technology and risk side. The business owner decides what good looks like. The technology and risk owner makes sure the setup is safe, reliable, and maintainable.

You also need a simple scorecard. Not twenty metrics. Just a few that matter. For example:

- hours saved each week

- ticket resolution time

- proposal turnaround time

- error or escalation rate

- staff usage and satisfaction

Without measurement, AI always feels promising and never proves itself. Without governance, it also spreads quietly through the business in inconsistent ways, with different teams using different tools, data, and rules.

Business outcome: clearer ROI, better executive visibility, and fewer shadow AI tools creating risk behind the scenes.

A realistic mid-size business scenario

Consider a 220-person professional services firm. Leadership wants one AI agent to answer staff questions, draft client proposals, and help with onboarding. It sounds efficient. But once the project starts, the cracks appear quickly.

Policies are spread across old folders. Pricing rules differ by team. Access permissions in the document system are inconsistent. HR documents contain sensitive employee data. No one agrees on which content is current. If the firm launches a broad agent at that point, it risks wrong answers, privacy issues, and low trust from staff.

The smarter rollout is much narrower. Start with a proposal assistant that only pulls from approved templates, case studies, and pricing guidance. Keep human approval in place before anything goes to a client. Measure how much drafting time is saved. Once that works, expand carefully into the next use case.

That is how strong AI programs usually succeed. Not with one giant leap, but with a sequence of controlled wins.

What a sensible AI agent rollout looks like

If you want AI agents to deliver real business value, a practical rollout usually looks like this:

- Pick one process with a clear cost, delay, or risk problem.

- Clean up the data and define the approved sources.

- Limit access and apply security controls from day one.

- Start with human review for important actions.

- Track measurable outcomes and expand only after success is proven.

That may sound less exciting than the big promises in the market. But it is how companies actually save money, reduce risk, and build confidence in AI.

For mid-size organisations, especially those already running on Microsoft 365, Azure, Intune, and Microsoft security tools, the quality of the rollout matters far more than the novelty of the demo. The winners will not be the businesses that moved first. They will be the ones that deployed carefully, securely, and with a real commercial outcome in mind.

At CloudPro Inc, that is the approach we believe in. Practical, hands-on advice. Clear business cases. Strong security foundations. And AI that earns its place in the business instead of creating more noise.

If you are considering AI agents but are not sure whether your current setup is ready, or whether the opportunity is real for your business, CloudPro Inc is happy to take a look and give you a straight answer with no strings attached.