Deep Research in Microsoft 365 Copilot is already reshaping how knowledge workers produce long-form analysis. Teams that used to spend days pulling together market scans, risk reviews, and competitive briefings now have a drafting partner that reasons over SharePoint, Outlook, Teams, and the open web in a single pass.

The newest addition — a Critique capability that reviews and challenges Deep Research outputs before they reach the user — sounds like a quality-of-life improvement. In reality, it changes the governance conversation entirely.

Organisations that treated Deep Research as “just another Copilot feature” now have a second AI layer inspecting the first. That matters for risk, compliance, and audit — and most governance frameworks have not caught up.

What the Critique Feature Actually Does

The Critique capability runs a second model pass over a Deep Research output. It looks for weak reasoning, unsupported claims, missing citations, and logical gaps, then either flags them to the user or rewrites the draft with corrections.

On the surface, this is a hallucination control. It is also much more than that.

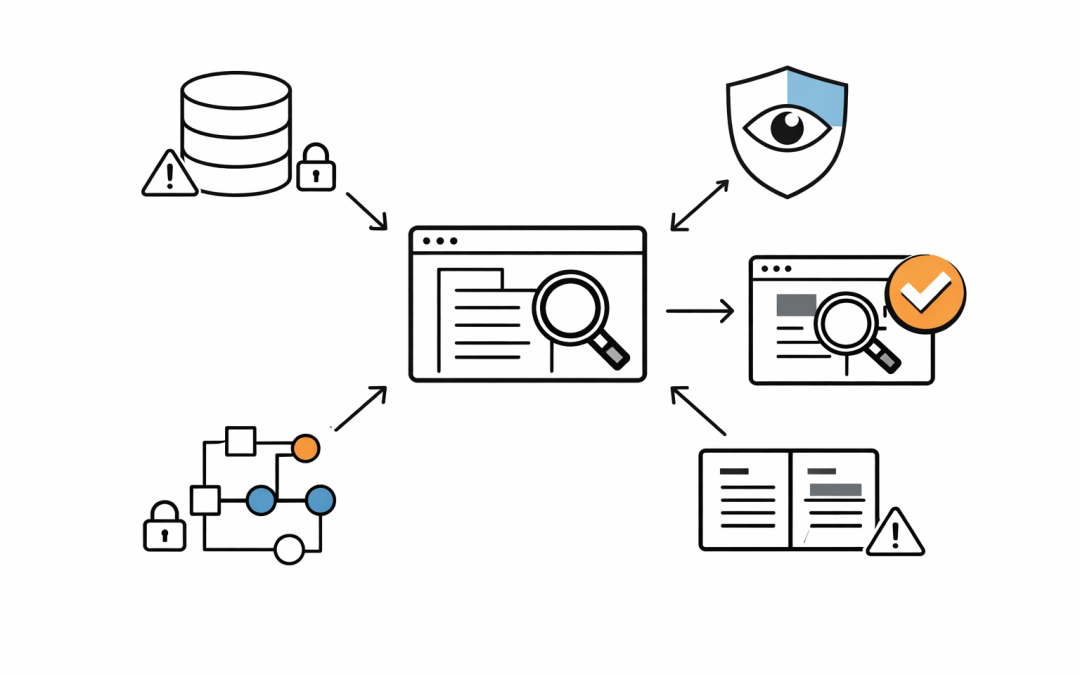

Critique sees the full research output, the underlying sources, and the reasoning chain. In governance terms, it is a second AI system with access to the same sensitive tenant data as the first — operating automatically, every time, with no separate consent prompt.

For a CIO or IT Director, the question is no longer “should we allow Deep Research?” It is “should we allow two AI layers to process the same sensitive content, and how do we prove that to an auditor?”

Why This Breaks Existing Copilot Governance

Most Microsoft 365 Copilot governance baselines were written for a single-pass architecture. The assumptions are straightforward:

- One prompt in, one response out.

- Data access is controlled by the user’s existing Microsoft 365 permissions.

- Purview handles audit and DLP.

- Responsible AI controls sit at the tenant level.

Critique breaks several of those assumptions quietly.

It introduces a second processing stage that may re-read sensitive documents, re-run queries, or pull in additional context to verify a claim. It also introduces a second decision point — the critique can change the substance of a Deep Research output without the user seeing the original.

If a legal team asks Deep Research to summarise a contract risk position, and Critique rewrites the risk language to sound more balanced, which version becomes the record? The one the user saw, or the one the first model produced?
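One way to make the record question answerable is to capture both versions explicitly. The sketch below is a minimal illustration, not a Purview schema or a Microsoft API. It assumes the organisation can capture the first-pass draft and the critiqued output for each run, and the record shape, the `record_two_pass` helper, and the model names are all hypothetical. Storing a SHA-256 hash of each version lets an auditor prove which text the user saw and whether Critique changed it, without duplicating sensitive content in the log.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


def sha256_text(text: str) -> str:
    # Hash a version so the record can prove which text was shown
    # without storing a second copy of sensitive content.
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class CritiqueAuditRecord:
    # Hypothetical record shape for illustration; not a Purview audit schema.
    prompt: str
    draft_model: str      # model that produced the first-pass draft
    critique_model: str   # model that ran the critique pass (may differ)
    draft_hash: str       # what the first model produced
    final_hash: str       # what the user actually saw
    critique_rewrote: bool
    flags: list[str] = field(default_factory=list)
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def record_two_pass(
    prompt: str,
    draft: str,
    final: str,
    flags: list[str],
    draft_model: str,
    critique_model: str,
) -> CritiqueAuditRecord:
    # Assumes both the draft and the critiqued output are available to capture.
    return CritiqueAuditRecord(
        prompt=prompt,
        draft_model=draft_model,
        critique_model=critique_model,
        draft_hash=sha256_text(draft),
        final_hash=sha256_text(final),
        critique_rewrote=(draft != final),
        flags=list(flags),
    )


# Usage: the contract-risk scenario, with placeholder model names.
rec = record_two_pass(
    prompt="Summarise our contract risk position",
    draft="Clause 14 exposes us to uncapped liability.",
    final="Clause 14 may expose us to significant liability.",
    flags=["unsupported claim: 'uncapped'"],
    draft_model="draft-model-v1",
    critique_model="critique-model-v2",
)
print(rec.critique_rewrote)  # True
```

The design choice is to log the fact of a rewrite and the identity of each model separately, so that a rewrite by a model the organisation never approved shows up in the audit trail rather than disappearing into the final response.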

This is the kind of question most organisations have not answered because they never needed to.

The Five Governance Gaps Most Organisations Have Right Now

In our work with Australian mid-market clients on Microsoft 365 Copilot rollouts, the same gaps keep appearing when a feature like Critique is switched on:

1. No audit trail for multi-stage AI reasoning. Purview captures the prompt and the final response, but the intermediate critique pass is often invisible. For regulated industries, that is an audit problem waiting to happen.

2. No policy for AI-on-AI data access. Data Loss Prevention policies were designed for users and apps, not for a second model evaluating a first model’s output. Sensitive data that passes DLP in the first pass can be reprocessed in the second without the same scrutiny.

3. No defined accuracy standard. If Critique flags a claim as unsupported, who decides whether the user sees the flag, the rewrite, or both? Most organisations have not defined this, so the default behaviour wins by accident.

4. No model governance for the critique layer. Organisations often approve Copilot based on the specific model version in use. A critique layer may run on a different model — with different capabilities, training data, and failure modes. That needs to be tracked.

5. No user training on what Critique changes. Users assume the output they see is what Deep Research produced. In practice, it may be a critique-corrected version. That gap in understanding is where trust — and compliance — erode.

What Good Governance Looks Like

Organisations that get this right treat Critique as a distinct AI workload, not an invisible feature.

That means:

- Including Critique in the Copilot risk assessment, with its own data flow documentation.

- Extending Purview audit to capture the critique pass, not just the final response.

- Defining an accuracy standard for high-stakes Deep Research use cases — legal, financial, regulatory, board-level.

- Requiring users to see both the original and the critiqued version for any output that will be used externally or in a compliance context.

- Reviewing the critique layer’s model version and update cadence as part of quarterly Copilot governance reviews.
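The last three practices can be written down as explicit policy rather than left to default behaviour. The sketch below assumes a hypothetical internal policy layer; it is not a Copilot setting. `display_mode`, `needs_model_review`, the category labels, the choice to show flags for non-external high-stakes work, and the reading of "quarterly" as 92 days are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class DisplayMode(Enum):
    FINAL_ONLY = "final_only"
    FLAGS_AND_FINAL = "flags_and_final"
    DRAFT_AND_FINAL = "draft_and_final"


# High-stakes categories named above; the labels themselves are illustrative.
HIGH_STAKES = {"legal", "financial", "regulatory", "board"}

# Assumption: "quarterly" means no more than 92 days between reviews.
MAX_REVIEW_AGE_DAYS = 92


@dataclass
class ResearchRequest:
    use_case: str
    external_or_compliance: bool  # output used externally or in a compliance context


def display_mode(req: ResearchRequest) -> DisplayMode:
    # External or compliance use: the user must see original and critiqued versions.
    if req.external_or_compliance:
        return DisplayMode.DRAFT_AND_FINAL
    # Other high-stakes work: surface Critique's flags with the final text (assumed standard).
    if req.use_case in HIGH_STAKES:
        return DisplayMode.FLAGS_AND_FINAL
    return DisplayMode.FINAL_ONLY


def needs_model_review(
    approved_version: str, running_version: str, last_review: date, today: date
) -> bool:
    # A critique model that differs from the approved version always triggers review.
    if running_version != approved_version:
        return True
    # Otherwise review once the last review is older than a quarter.
    return (today - last_review).days > MAX_REVIEW_AGE_DAYS


print(display_mode(ResearchRequest("legal", external_or_compliance=True)).value)
# draft_and_final
print(needs_model_review("v1", "v1", date(2025, 1, 1), date(2025, 5, 1)))
# True
```

Making the rules explicit means the answer to "who decides what the user sees?" is a reviewable artefact, not whatever the default happens to be.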

None of this is exotic. It is the same discipline that already applies to any automated decisioning system — applied to AI features that most organisations have been treating as consumer-grade productivity tools.

The Essential Eight Angle

For Australian organisations mapping AI controls to the Essential Eight, Critique raises specific questions under Application Control and Restrict Administrative Privileges. A second model pass that can modify outputs is effectively a new application behaviour inside Microsoft 365. It should be risk-assessed the same way a new agent or connector would be.

The ACSC guidance on AI systems — still maturing but already pointing in a clear direction — emphasises transparency, auditability, and human oversight. Critique, left on its default settings, can reduce all three if governance is not updated.

The Shift CIOs Need to Make

The real change is not technical. It is a change in mindset.

Microsoft 365 Copilot is no longer a single AI feature with a single governance posture. It is a stack of AI capabilities — Deep Research, Critique, agents, connectors, reasoning models — each with its own data access, decision authority, and failure mode.

Governance that treats all of it as “Copilot” will miss the interesting risks. Governance that maps each layer separately will catch them early and keep the productivity benefits intact.

Critique is a useful feature. Organisations that govern it well will get higher-quality AI outputs and a stronger audit position. Organisations that ignore it will discover the output-quality and audit problems at the same time, usually during a regulator’s review or a board question nobody wanted.

Cloud Pro Inc. helps Australian organisations design and implement Microsoft 365 Copilot governance that keeps pace with new features like Critique and Deep Research. If your Copilot rollout has moved faster than your governance, we can help you close the gap before it becomes a compliance issue.