On April 8, Meta Superintelligence Labs unveiled Muse Spark — their first model in a new Muse series — and framed it as the opening move toward “personal superintelligence.” The pitch is bold: an AI assistant that does not just answer questions, but understands your world because it is built on your relationships, your content, and your context across Instagram, Facebook, WhatsApp, and Messenger.

For CIOs evaluating enterprise AI strategy, that pitch deserves a closer look before the excitement takes hold.

What Muse Spark Actually Is

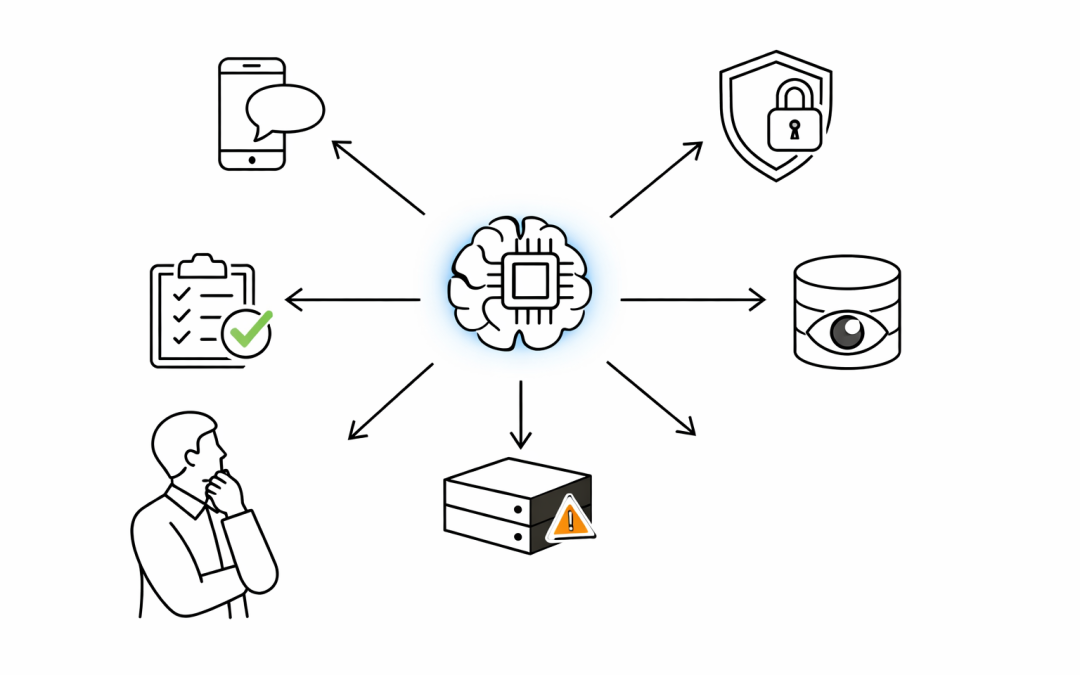

Muse Spark is a natively multimodal reasoning model built from the ground up by Meta Superintelligence Labs. It supports tool use, visual chain of thought, and multi-agent orchestration, meaning it can spin up parallel sub-agents to tackle different parts of a complex task simultaneously.

The reported numbers are impressive. Meta claims more than an order-of-magnitude improvement in compute efficiency over Llama 4 Maverick, their previous flagship. A new “Contemplating mode” orchestrates multiple reasoning agents in parallel and scores 58 percent on Humanity’s Last Exam and 38 percent on FrontierScience Research.

It currently powers the Meta AI app and meta.ai, with rollout planned across WhatsApp, Instagram, Facebook, Messenger, and Ray-Ban Meta AI glasses in the coming weeks.

The “Personal Superintelligence” Claim

Meta’s framing is deliberate. They are not calling Muse Spark an assistant or a chatbot. They are calling it the first step toward personal superintelligence — an AI that “truly understands your world because it is built on it.”

That world, in Meta’s case, means the content people share across its platforms. Shopping recommendations draw from creators and communities users already follow. Search results surface public posts from locals. Health reasoning was developed with over 1,000 physicians. The model is purpose-built for Meta’s ecosystem, not for general enterprise deployment.

This distinction matters for any CIO considering where Muse Spark fits in their AI procurement roadmap.

Question 1: Where Does Your Enterprise Data Actually Go?

Muse Spark is designed to understand users by drawing on their social graph, their content, and their interactions across Meta’s platforms. That is powerful for consumer use cases. For enterprise scenarios, it raises immediate questions about data boundaries.

When employees use Meta AI through WhatsApp or Messenger — apps already common in Australian workplaces — what data feeds the model’s “personal understanding”? Where does the boundary sit between consumer convenience and enterprise data governance?

Australian organisations operating under the Privacy Act 1988 and the Australian Privacy Principles need clear answers before endorsing any tool that blurs personal and professional contexts. The fact that Muse Spark is explicitly built to leverage cross-platform context makes this question more urgent, not less.

Question 2: What Happens When the Model Is Not Open-Source?

Meta built its AI reputation on open source with the Llama series. Muse Spark breaks that pattern. The model is closed, available only through Meta’s own products and a private API preview for select partners.

For enterprises that invested in Llama-based architectures — fine-tuning models on their own infrastructure, maintaining control over weights and deployment — Muse Spark represents a strategic pivot. Meta has stated they “hope to open-source future versions,” but hope is not a procurement guarantee.

This matters for Australian mid-market organisations that need predictable vendor roadmaps. A closed model tied to a consumer platform is a fundamentally different proposition from an open model running on your own cloud tenant.

Question 3: Is “Personal Superintelligence” What Your Organisation Actually Needs?

The capabilities are real. Multimodal perception, parallel agent orchestration, health reasoning, and visual coding are genuine advances. But the framing — personal superintelligence — reveals the intended user: consumers, not enterprises.

Enterprise AI needs are different. Organisations need models that integrate with existing security controls, respect data classification policies, support audit trails, and operate within compliance frameworks like the Essential Eight.

The risk for CIOs is conflation. When a compelling consumer AI product enters the workplace through the back door — via employees already using WhatsApp and Instagram — shadow AI adoption accelerates. The model does not need enterprise approval to start shaping how people work. It just needs a smartphone.

What This Means for AI Strategy

Muse Spark is a significant technical achievement that validates Meta’s scaling trajectory. The compute efficiency gains alone signal that Meta is serious about competing with OpenAI, Google, and Anthropic at the frontier.

But technical capability and enterprise readiness are not the same thing. CIOs should watch Muse Spark closely for what it reveals about the direction of consumer AI — and use that insight to strengthen their own AI governance posture.

Practical steps worth considering now:

- Audit Meta AI usage across WhatsApp, Messenger, and Instagram within the organisation

- Update acceptable use policies to address AI assistants embedded in consumer messaging platforms

- Revisit vendor lock-in assessments for any Llama-based deployments, given Meta’s shift toward closed models

- Ensure AI governance frameworks explicitly cover consumer AI tools that employees adopt without formal procurement
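
For the first step, one possible starting point is a review of web proxy or DNS logs. The Python sketch below is illustrative only, not a definitive audit method. It assumes a hypothetical CSV log export with `user` and `host` columns, and its watchlist contains only `meta.ai`, the one domain named in this article. Usage inside the WhatsApp, Messenger, or Instagram apps may not be visible in such logs at all, so any additional domains or data sources would need to be confirmed by your own network team.

```python
import csv
import io
from collections import Counter

# Assumption: watchlist of hostnames to flag. Only meta.ai is named in this
# article; extend the set with domains your own network team has verified.
WATCHLIST = {"meta.ai"}


def matches_watchlist(host: str, watchlist: set[str] = WATCHLIST) -> bool:
    """Return True if host equals a watched domain or is a subdomain of one."""
    host = host.strip().lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in watchlist)


def count_hits_by_user(log_file) -> Counter:
    """Count watched-domain requests per user.

    Assumes a hypothetical CSV log with 'user' and 'host' columns.
    """
    hits = Counter()
    for row in csv.DictReader(log_file):
        if matches_watchlist(row["host"]):
            hits[row["user"]] += 1
    return hits


if __name__ == "__main__":
    # Made-up sample rows standing in for a real log export.
    sample = io.StringIO(
        "user,host\n"
        "alice,www.meta.ai\n"
        "bob,example.com\n"
        "alice,meta.ai\n"
        "carol,notmeta.ai\n"
    )
    for user, n in count_hits_by_user(sample).most_common():
        print(f"{user}: {n}")
```

Matching on the exact domain or a true subdomain avoids false positives from lookalike hostnames such as `notmeta.ai`. Per-user counts of this kind are best treated as a prompt for a policy conversation rather than as evidence of misuse.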

The organisations that manage AI adoption proactively — rather than reacting after consumer tools are already embedded in workflows — will be the ones that extract value without accumulating risk.

Our team works with Australian mid-market organisations to build AI strategies that balance innovation with governance. If Muse Spark has prompted questions about your AI posture, we are here to help.