The three dominant AI vendors are no longer competing on model benchmarks alone. They are competing to become permanent infrastructure inside the enterprise.

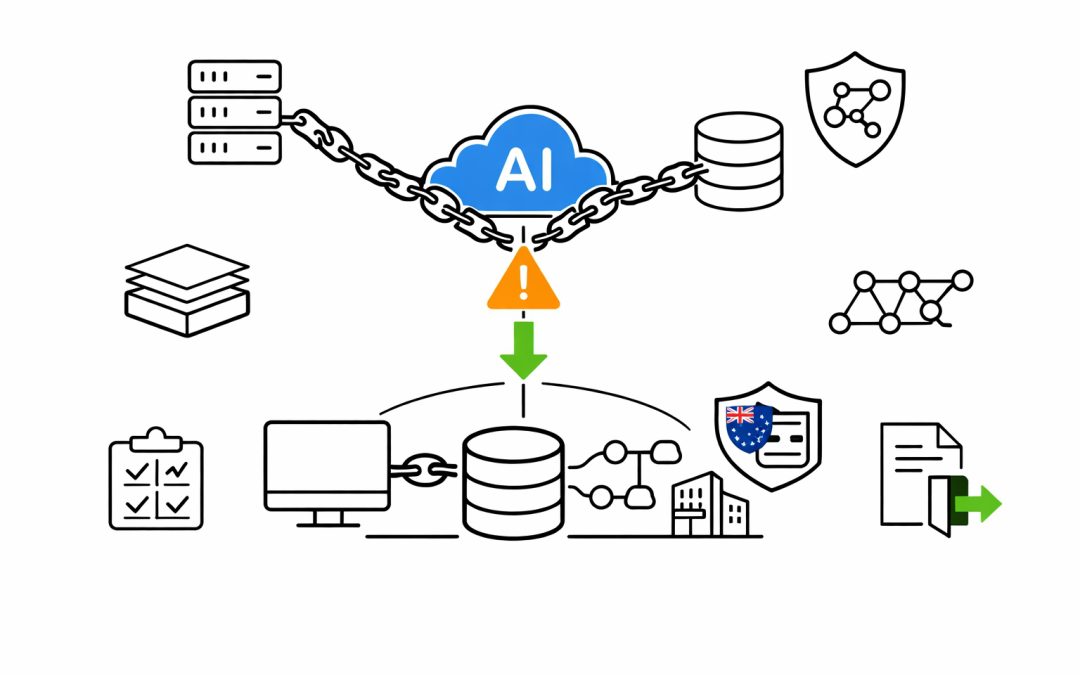

And most mid-market organisations are walking into these relationships without a vendor risk strategy.

The Lock-In Is Accelerating

In the past 90 days, the enterprise AI market has moved faster than most CIOs expected.

Anthropic launched the Claude Partner Network with a $100 million investment, training 30,000 Accenture professionals and certifying solution architects to embed Claude into enterprise workflows. Their run-rate revenue surpassed $30 billion, with over 1,000 business customers each spending more than $1 million annually. Claude is now available on AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure Foundry — the only frontier model on all three major clouds.

OpenAI closed a $122 billion raise and reported that enterprise revenue now exceeds 40 per cent of total revenue, on track to reach parity with consumer revenue by the end of 2026. Its APIs process more than 15 billion tokens per minute. The company is building what it calls a “superapp”: a unified agent-first experience combining ChatGPT, Codex, browsing, and agentic workflows into a single platform designed to become the default interface for knowledge work.

Google continues to deepen Gemini’s integration across Workspace, Cloud, and its developer ecosystem. Gemma 4 open models expand the developer surface area, while Vertex AI consolidates enterprise model serving, fine-tuning, and deployment under a single control plane tied to Google Cloud billing.

Each vendor is pursuing the same strategy: make their AI the path of least resistance for enterprise teams, then make switching increasingly expensive.

Why This Matters for Mid-Market Organisations

Large enterprises have dedicated vendor management teams, legal departments experienced in technology contracts, and the leverage to negotiate custom terms.

Mid-market organisations, the businesses with 50 to 500 employees that form the backbone of the Australian economy, typically do not. They adopt what works, sign annual agreements, and build workflows around a single vendor’s tools before anyone maps out the dependency chain.

The risk is not that any one vendor is bad. The risk is that without deliberate planning, an organisation can reach a point where switching AI providers would require rewriting applications, retraining staff, migrating prompt libraries, rebuilding integrations, and renegotiating data agreements — all at once.

That is vendor lock-in. And in mid-2026, it is happening faster with AI than it ever did with cloud infrastructure.

Five Practical Steps to Manage AI Vendor Risk

1. Separate the Model Layer from the Application Layer

The most effective defence against lock-in is architectural. Build internal AI capabilities using abstraction layers that sit between the application and the model provider.

If the business logic talks directly to a vendor-specific API with vendor-specific prompt formats, switching costs are high. If there is an intermediary layer — an internal gateway or orchestration service — swapping the underlying model becomes an operational task rather than a rewrite.
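As a rough illustration of that intermediary layer, here is a minimal Python sketch. Every name in it (`ModelProvider`, `StubProvider`, `Gateway`) is hypothetical and invented for this example. The stub stands in for a real vendor adapter, which would wrap that vendor's SDK behind the same `complete` method.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


@dataclass
class Completion:
    text: str
    provider: str  # recorded so callers can see which vendor answered


class ModelProvider(Protocol):
    """Hypothetical interface that every vendor adapter must satisfy."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Placeholder adapter. A real one would call the vendor's SDK here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Gateway:
    """Internal gateway: business logic calls this, never a vendor SDK."""

    def __init__(self) -> None:
        self._providers: Dict[str, ModelProvider] = {}
        self._active: Optional[str] = None

    def register(self, provider: ModelProvider) -> None:
        self._providers[provider.name] = provider
        if self._active is None:
            # The first registered provider becomes the default.
            self._active = provider.name

    def use(self, name: str) -> None:
        """Switch the active vendor without touching any calling code."""
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> Completion:
        if self._active is None:
            raise RuntimeError("no provider registered")
        provider = self._providers[self._active]
        return Completion(text=provider.complete(prompt), provider=provider.name)
```

With this shape, changing vendors is a call to `gateway.use("vendor_b")` or a configuration change, while application code keeps calling `gateway.complete(...)`. A production gateway would also need to normalise prompt formats, handle errors, and log usage, none of which is shown here.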

2. Negotiate Data Rights Before You Start

Every enterprise AI agreement should address three questions upfront: Who owns the data used for fine-tuning? What happens to that data when the contract ends? Can the vendor use interaction data to improve their models?

These are not hypothetical concerns. As AI vendors scale, the data flowing through their platforms becomes a competitive asset. Our team routinely sees organisations well into deployment before anyone asks these questions.

3. Run Multi-Vendor Pilot Programmes

Testing a single vendor against a business use case is standard. Testing two or three vendors against the same use case is smarter.

Multi-vendor pilots reveal not just which model performs best today, but how each vendor’s ecosystem works — their support model, integration patterns, pricing trajectory, and how easily outputs can be reproduced on a different platform.

The cost of running a parallel pilot is modest. The cost of discovering lock-in after 18 months of production use is significant.
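A parallel pilot can start as a small harness that sends the same test cases to each candidate and compares the results. The sketch below assumes each vendor is wrapped in a plain function that takes a prompt and returns text. The `run_pilot` name and the keyword-match scoring are illustrative assumptions, and a real evaluation would use richer criteria such as human review, latency, and cost.

```python
from typing import Callable, Dict, List, Tuple

# A provider is any function that maps a prompt to a text response.
Provider = Callable[[str], str]


def run_pilot(
    providers: Dict[str, Provider],
    cases: List[Tuple[str, str]],
) -> Dict[str, float]:
    """Return, per provider, the fraction of cases whose response
    contains the expected keyword (case-insensitive)."""
    if not cases:
        raise ValueError("at least one test case is required")
    scores: Dict[str, float] = {}
    for name, ask in providers.items():
        passed = sum(
            1
            for prompt, expected in cases
            if expected.lower() in ask(prompt).lower()
        )
        scores[name] = passed / len(cases)
    return scores
```

Because every vendor sees identical prompts, the same case list can be rerun whenever pricing or model versions change, which keeps the comparison current at little extra cost.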

4. Maintain an AI Dependency Register

Most organisations maintain asset registers, software inventories, and cloud resource catalogues. Very few maintain an AI dependency register.

The register should capture which AI vendor powers which workflow, what data each integration accesses, which teams rely on it, and the estimated migration effort if that vendor’s terms, pricing, or capabilities changed.

This is not bureaucratic overhead. It is the same discipline that mature organisations apply to any critical supplier — and in 2026, AI models are becoming exactly that.
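A register like this does not need special tooling to get started. The following Python sketch shows one possible shape for an entry and a simple roll-up of migration effort per vendor. The field names, the `Dependency` class, and the use of person-days as the effort unit are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Dependency:
    """One row in a hypothetical AI dependency register."""

    workflow: str               # business process that uses the model
    vendor: str                 # AI vendor powering it
    data_accessed: List[str]    # categories of data the integration touches
    teams: List[str]            # teams that rely on the workflow
    migration_effort_days: int  # rough estimate, in person-days


def migration_effort_by_vendor(register: List[Dependency]) -> Dict[str, int]:
    """Sum the estimated migration effort for each vendor."""
    totals: Dict[str, int] = {}
    for dep in register:
        totals[dep.vendor] = totals.get(dep.vendor, 0) + dep.migration_effort_days
    return totals
```

Even a rough total per vendor makes concentration visible: if one vendor accounts for most of the estimated effort, that is where a change in terms or pricing would hurt most.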

5. Build Exit Clauses into Every Agreement

No vendor relationship should begin without an exit plan. AI contracts should include data portability provisions, reasonable notice periods, transition support commitments, and clear terms around fine-tuned model ownership.

If a vendor resists these terms, that resistance is itself a signal about how they view the relationship.

The Australian Context

For Australian organisations, AI vendor risk intersects with existing compliance obligations. Data residency requirements, the Privacy Act, and sector-specific regulations such as APRA CPS 234 for financial services all apply to AI-processed data.

The Australian Signals Directorate’s Essential Eight framework does not yet explicitly address AI vendor management, but its principles around patching, application control, and restricting administrative privileges all extend naturally to AI platform governance.

Organisations that get ahead of this — treating AI vendor risk with the same rigour as cloud vendor risk — will be better positioned when regulation inevitably catches up.

Moving Forward Without Getting Locked In

The AI capabilities available today from Anthropic, OpenAI, and Google are genuinely transformative. No sensible strategy involves avoiding these tools.

But adopting them without a vendor risk framework is the enterprise equivalent of building a house on land you do not own. It works until it does not.

The organisations that will thrive through the next phase of enterprise AI are those that adopt aggressively and manage deliberately — choosing vendors with eyes open, building for portability, and negotiating from a position of informed strength.

CPI Consulting helps mid-market Australian organisations adopt AI platforms while building practical safeguards against vendor concentration risk. If your team is evaluating AI vendors or already deeply committed to one, a structured vendor risk review can identify exposure before it becomes a constraint.